| Quantity | 3+ units | 10+ units | 30+ units | 50+ units | More |
|---|---|---|---|---|---|
| Price/Unit | $744.29 | $729.10 | $706.32 | $675.94 | Contact us |

SOARM101-Pro Opensource 6DOF Mechanical Arm DIY Kit 30kg.cm AI Arm with 3D Printed Parts Compatible with Hugging Face LeRobot

$449.55

SOARM101 Opensource 6DOF Mechanical Arm DIY Kit 19.5kg.cm AI Arm with 3D Printed Parts Compatible with Hugging Face LeRobot

$381.54

XLeRobot SO-ARM101 Mobile Robot (Expansion Kit + 2 Leader Arms + 2 Follower Arms + Chassis + Cart + Jetson Orin Nano)

$2,296.50

Omni Wheel ROS Car Robot Car Assembled with Depth Camera Voice Module N10 Lidar LubanCat 1S

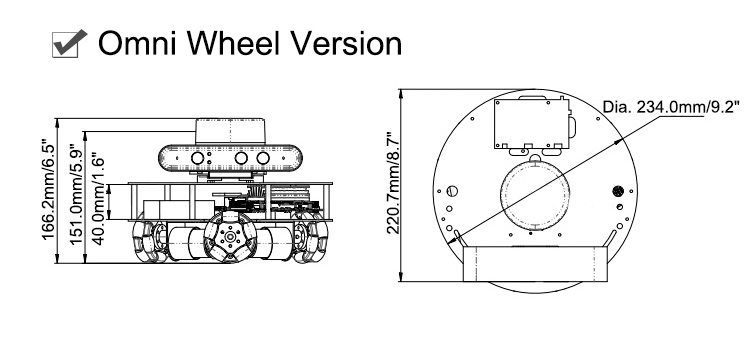

Omni Wheel Version:

- Classic omnidirectional construction with three metal omnidirectional wheels.

- Includes the car chassis, STM32 controller, battery, charger, lidar, 7" touch screen (detachable), depth camera, voice module and a ready-to-use ROS system.

Description:

The R550 combines teaching performance, cost-effectiveness and build quality. Equipped with a lidar and a depth camera, it can be used for mapping and navigation, deep learning, 3D vision, robot formation and similar applications.

Features:

System images for ROS1 Melodic, ROS1 Noetic, and ROS2 Galactic are available.

- Supports multi-robot systems

- Supports cross-platform operation

- Supports real-time control

- Supports microcontrollers. Compared with ROS1, ROS2 removes the central master node at the application layer, its middleware communicates over DDS to improve real-time performance, and its system layer adds support for Windows, macOS, and microcontrollers.

Patented GMR high-precision encoder

The car uses a newly upgraded 500-line AB-phase GMR high-precision encoder. Its accuracy is more than 38 times that of a Hall encoder, which is what most similar products on the market use. With the GMR encoder, the car performs well when navigating at low speeds.

Parameters:

- Encoder type: GMR (Giant Magneto Resistance)

- Reduction ratio: 1:30

- Rated voltage: 12V

- Rated torque: 1kg.cm

- Rated current: 360mA

- Rated power: 4.32W

- Stall torque: 10kg.cm

- Stall current: 2.8A
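As a rough illustration of what the encoder and gearbox figures imply, the sketch below converts encoder counts into wheel travel and checks the rated power against the rated voltage and current. It assumes the 500 lines are counted on the motor shaft before the 1:30 gearbox and that the controller uses 4x quadrature decoding of the A/B phases; the listing confirms neither, and the function names are illustrative only.

```python
import math

ENCODER_LINES = 500        # from the listing: 500-line AB-phase encoder
GEAR_RATIO = 30            # from the listing: 1:30 reduction ratio
QUADRATURE_FACTOR = 4      # assumption: every A/B edge is counted (4x decoding)
WHEEL_DIAMETER_M = 0.060   # from the listing: 60mm omni wheels


def counts_per_wheel_rev() -> int:
    """Encoder counts for one full turn of the wheel (output shaft)."""
    return ENCODER_LINES * QUADRATURE_FACTOR * GEAR_RATIO


def counts_to_distance_m(counts: int) -> float:
    """Distance rolled by the wheel for a given number of encoder counts."""
    circumference = math.pi * WHEEL_DIAMETER_M
    return counts / counts_per_wheel_rev() * circumference


def rated_power_w(voltage_v: float = 12.0, current_a: float = 0.36) -> float:
    """Electrical power at the rated operating point (P = V * I)."""
    return voltage_v * current_a
```

Under these assumptions one wheel revolution corresponds to 60,000 counts, and 12V at 360mA gives the listed 4.32W.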

Omni wheels

- The omni wheel chassis has been upgraded with clamping couplings.

We have worked with Orbbec to create a community of 3D visual developers

The R550 incorporates the past year's 3D vision work by engineers from Wheeltec and Orbbec. Through SDK-level development, advanced functions such as skeleton recognition and somatosensory (body-motion) following have been implemented.

Robot cluster control algorithm with independent intellectual property rights

By combining the Navigator algorithm with our own algorithm, stable robot cluster (formation) control is achieved. This technology has been widely deployed in major universities in China. At least three robots are required for robot formation.

Key Functions (fully open source):

- Rtabmap vision and lidar 3D mapping and navigation: Supports pure visual mapping and navigation with Rtabmap, as well as fused lidar and visual mapping and navigation.

- Classic 2D lidar mapping, navigation and obstacle avoidance: Supports Gmapping, Hector, Karto and Cartographer mapping, plus fixed-point navigation, multi-point navigation and obstacle avoidance during navigation.

- ORB visual mapping: ORB-SLAM2 is an open source SLAM framework that supports monocular, stereo, and RGB-D cameras. It computes the camera pose in real time while building a sparse 3D reconstruction of the surroundings, and it recovers true scale in stereo and RGB-D modes.

- ROS Qt graphical interface: A Qt graphical interface provides one-click access to ROS functions and visual feedback on the car's speed and battery level.

- 3D reconstruction for real autonomous driving: The optional Leishen multi-line lidar enables outdoor 3D mapping, a step closer to real autonomous driving.

- Automatic parking: A patented algorithm combined with deep learning lets the robot park itself fully automatically. This is a core capability in the autonomous driving industry.

- Lane keeping: A core algorithm identifies the lane so that the robot stays within it. This is a core capability in the autonomous driving industry.

- Steering decisions: Decisions are made by combining a patented algorithm, deep learning, and lane and traffic sign recognition. This is a core capability in the autonomous driving industry.

- Yolo traffic sign recognition: Teaches you to train your own deep learning models. Simple autonomous driving functions are implemented with an RGB camera.

- Yolo object and gesture recognition: Identifies everyday objects using common deep learning model libraries.

- Ros_tensorflow object detection: Based on TensorFlow, it recognizes common objects and handwritten digits.

- Depth vision following: The robot follows an object by measuring its distance and bearing with the depth camera.

- KCF target tracking: The robot follows an object with fixed features that the depth camera recognizes.

- AR tag recognition and following: The depth camera recognizes an AR tag and tracks its pose so that the robot can follow the tag; AR tag positioning is also supported.

- RRT autonomous exploration mapping: Without manual control of the car, the RRT algorithm explores and maps the area on its own, saves the map, and returns the car to the starting point.

- Web camera surveillance: The robot camera image can be directly viewed through any browser on the PC, and remote monitoring can be quickly deployed.

- RGB camera line following: The robot follows a line on the ground with the RGB camera, while the lidar provides automatic obstacle avoidance during line following.

- Lidar follower: The robot scans nearby obstacles through lidar and selects the nearest object to follow.

- LiDAR angle shielding: Through SDK optimization, angle shielding can be achieved for all lidars.

- TEB and DWA path planning: Detailed video tutorials are provided. Through Python mini games, you will learn navigation path planning from scratch.

- Robot chassis kinematics analysis: Kinematic analysis is provided for the common chassis types on the market, including Ackermann, differential, tracked, mecanum wheel, omni wheel, and 4WD vehicles.

- Control board protection circuit: The industrial-grade four-layer board has cleaner electrical routing. A thermistor provides real-time temperature monitoring and protection, and current sampling provides hardware over-current protection that detects motor stalls.

- ROS app for mapping and navigation: The app controls the ROS side, covering car movement, mapping, navigation and other functions.

- Low-level parameter control app for Android and iOS: Supports parameter tuning, gravity sensor control, and waveform display.
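To give a feel for the omni wheel kinematics mentioned in the list above, here is a minimal sketch of inverse kinematics for a three-wheel omnidirectional chassis. It is not the vendor's source code. It assumes the wheels are spaced evenly at 0°, 120° and 240° around the center and roll tangentially; the center-to-wheel distance of 0.10m is a hypothetical value, and only the 60mm wheel diameter comes from the listing.

```python
import math

WHEEL_ANGLES_DEG = (0.0, 120.0, 240.0)  # assumption: evenly spaced wheel mounts
CHASSIS_RADIUS_M = 0.10                  # hypothetical center-to-wheel distance
WHEEL_RADIUS_M = 0.030                   # 60mm wheel diameter from the listing


def wheel_speeds(vx: float, vy: float, omega: float) -> list:
    """Map a body velocity to wheel angular velocities in rad/s.

    vx, vy are the chassis linear velocities in m/s and omega is the
    yaw rate in rad/s. Each wheel's rolling direction is tangential to
    the circle through the wheel mounts.
    """
    speeds = []
    for deg in WHEEL_ANGLES_DEG:
        theta = math.radians(deg)
        # Project the body velocity onto the wheel's rolling direction,
        # then add the contribution of the chassis rotation.
        rim_speed = (-math.sin(theta) * vx
                     + math.cos(theta) * vy
                     + CHASSIS_RADIUS_M * omega)
        speeds.append(rim_speed / WHEEL_RADIUS_M)
    return speeds
```

With this layout a pure rotation drives all three wheels at the same speed, while a pure translation produces wheel speeds that sum to zero.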

Provide Source-level Video Tutorials (with Chinese and English subtitles):

- Deep learning video tutorial based on autonomous driving sandbox scenario

- MoveIt robotics video tutorial

- Detailed video on ROS SLAM principles and algorithms

- ROS basics thematic video tutorial

- Video on STM32 low-level source code and ROS communication

- ROS-related basic tutorials for Ubuntu

- ROS function development code-level video tutorial (some videos with Chinese and English subtitles)

- ROS voice video tutorial

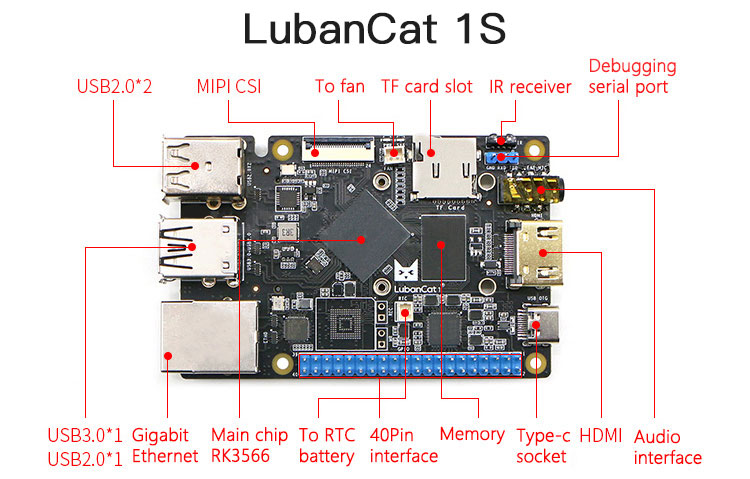

ROS Main Control Board (LubanCat 1S):

- CPU: Quad-core Cortex-A55, 1.8GHz

- GPU/NPU: Built-in independent NPU with up to 1 TOPS of computing power

- RAM: 2GB LPDDR4X

- USB interface: 1*USB3.0 + 3*USB2.0

- Image input: MIPI CSI

- Image output: 1*HDMI 2.0

- Video encoding: H.264/H.265 (1080p60)

- Video decoding: H.264/H.265 (4Kp60) VP9

- On-board storage: 32G Micro SD card

- Network interface: 10/100/1000M adaptive Ethernet port

- Number of GPIO pins: 40

- Rated Power: 15W (5V/3A)

- Power input: 5V

- Note: SD card is not included

- Operating system: Ubuntu

Leishen N10 Lidar Sensor:

- Measuring radius: 25m/82ft

- Scanning frequency: 10Hz

- Sampling frequency: 4500Hz

- Output: Angle, distance and light intensity

- Angular resolution: 0.8°

- Drive motor type: brushless motor

- 360° scanning ranging: Yes

- Interface type: Serial port

- Radar principle: TOF
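The lidar figures above are mutually consistent, which the following sketch checks: dividing the sampling frequency by the scanning frequency gives the number of points per revolution, and 360° divided by that count gives the angular resolution. The `polar_to_xy` helper is illustrative only and is not part of any Leishen SDK.

```python
import math

SAMPLE_RATE_HZ = 4500   # from the listing: sampling frequency
SCAN_RATE_HZ = 10       # from the listing: scanning frequency


def points_per_revolution() -> float:
    """Range samples collected during one full 360° sweep."""
    return SAMPLE_RATE_HZ / SCAN_RATE_HZ


def angular_resolution_deg() -> float:
    """Angle between two consecutive samples, in degrees."""
    return 360.0 / points_per_revolution()


def polar_to_xy(angle_deg: float, distance_m: float) -> tuple:
    """Convert one (angle, distance) sample to Cartesian coordinates."""
    theta = math.radians(angle_deg)
    return (distance_m * math.cos(theta), distance_m * math.sin(theta))
```

At 4500 samples per second and 10 revolutions per second, each sweep holds 450 points, which matches the listed 0.8° angular resolution.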

Wireless Controller for PS2:

- Supports the original PS2 controller for driving the robot car. Speed can be adjusted continuously with the joystick.

Specifications:

- Version: Omni wheel

- Drive structure: three-wheel omnidirectional structure

- Wheels: 60mm/2.4" metal omni wheels

- Servos: None

- Dimensions: 240 x 240 x 183mm/9.4 x 9.4 x 7.2"

- Car weight: 2.18kg/4.8lb

- Load capacity: 3kg/6.6lb

- Maximum speed: 0.84m/s

- Endurance time (speed 0.45m/s with light load): 7.5h

- Endurance time (speed 0.45m/s with 1kg load): 5h

- Motor: MG513 gear motor with metal gears

- Encoder: High-precision 500-line AB-phase GMR encoder

- Control modes: App, PS2 wireless controller, CAN, and serial port

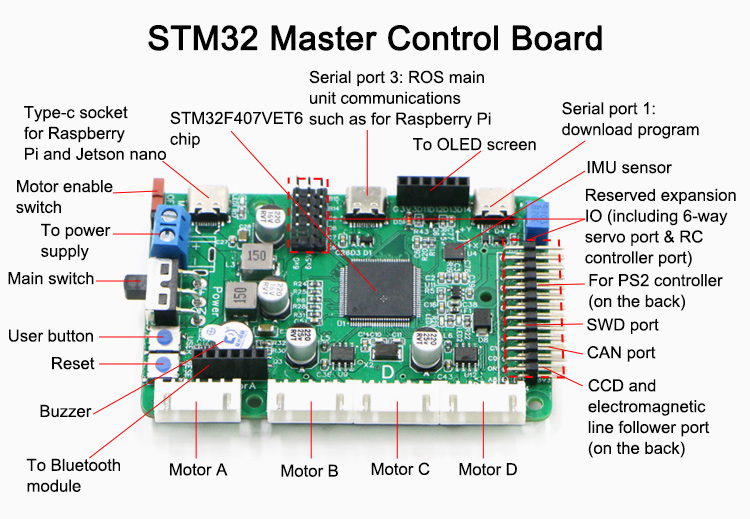

- STM32 master: STM32F407VET6

- Lidar: Leishen N10

- ROS main control: LubanCat 1S

- Depth camera: Astra series RGBD depth camera

- Operating system: The ROS side runs ROS1 Melodic, ROS1 Noetic, or ROS2 Galactic; the STM32 runs FreeRTOS

- Resources: Video tutorials, ROS source code, STM32 source code, and ROS system image

Battery Parameters:

- Protection: over-discharge, overcharge, short-circuit and over-voltage

- Capacity: 12V 9800mAh

- Plug: T-type discharge plug

- Dimensions: 98.6 x 62 x 29mm/3.9 x 2.4 x 1.1"

- Weight: 0.36kg/0.8lb
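For a back-of-the-envelope reading of the battery and endurance figures, the sketch below estimates the stored energy and the average power draw implied by the listed endurance times. It assumes the full 9800mAh is usable at a constant 12V, which real lithium packs only approximate, so treat the results as rough estimates and not as measured values.

```python
BATTERY_VOLTAGE_V = 12.0       # from the listing
BATTERY_CAPACITY_MAH = 9800.0  # from the listing


def energy_wh() -> float:
    """Nominal stored energy, assuming a constant 12V over the discharge."""
    return BATTERY_VOLTAGE_V * BATTERY_CAPACITY_MAH / 1000.0


def average_power_w(endurance_h: float) -> float:
    """Average draw implied by running the full pack down in endurance_h hours."""
    return energy_wh() / endurance_h


def distance_km(speed_m_s: float, endurance_h: float) -> float:
    """Distance covered at a constant speed over the endurance time."""
    return speed_m_s * 3600.0 * endurance_h / 1000.0
```

With the listed 7.5h light-load endurance at 0.45m/s, this gives roughly 117.6Wh of energy, an average draw of about 15.7W, and a little over 12km of travel.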

Orbbec Depth Camera:

- Depth resolution: up to 640x480

- RGB resolution: up to 640x480

- RGB sensor field of view (HxV): 63.1°x49.4°

- Depth sensor field of view (HxV): 58.4°x45.5°

- Depth technology: monocular structured light + monocular RGB

- Depth frame rate: 640x480 up to 30fps

- RGB frame rate: 640x480 up to 30fps

- Depth range: 0.6-4m/2-13.1ft

- Dimensions: 165 x 40 x 30mm/6.5 x 1.6 x 1.2"

- Data transfer interface: USB2.0 and above

- A hinge makes the camera angle adjustable
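The field of view and depth range together determine how much of a scene the depth sensor can cover. The sketch below applies the standard pinhole relation, width = 2 · d · tan(FOV / 2), to the listed figures. The function names are illustrative and this is not an Orbbec SDK call.

```python
import math

DEPTH_HFOV_DEG = 58.4     # from the listing: depth sensor horizontal FOV
DEPTH_VFOV_DEG = 45.5     # from the listing: depth sensor vertical FOV
DEPTH_RANGE_M = (0.6, 4.0)  # from the listing: usable depth range


def coverage_m(distance_m: float, fov_deg: float) -> float:
    """Width (or height) of the area seen at a given distance."""
    return 2.0 * distance_m * math.tan(math.radians(fov_deg) / 2.0)


def in_depth_range(distance_m: float) -> bool:
    """True if the distance lies inside the listed depth range."""
    near, far = DEPTH_RANGE_M
    return near <= distance_m <= far
```

For example, `coverage_m(4.0, DEPTH_HFOV_DEG)` gives the horizontal span visible at the far end of the depth range, and `in_depth_range(0.5)` reports that an object 0.5m away is too close to measure.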

STM32 Master Control Board:

- Main control chip: 100-pin STM32F407, with good expansion performance

- Model airplane remote control interface: supported

- Download interface: one-click serial port download or SWD interface

- External power supply: dual 5V 5A

- Expansion interface: CAN, CCD, electromagnetic line follower, etc.

- GPIO reserved: dozens

- Reserved servo interface: 6-way servo interface (external 6DOF manipulator arm can be connected)

- Reserved serial port: lead out 4 serial ports

- Protection circuit: Over-temperature, short-circuit and over-current protection

- Number of board layers: Industrial grade 4-layer board

Servo Parameters:

- All-metal output shaft for longer servo life

- Model: S20F high-torque digital servo

- Gears: metal gears

- Voltage: 5-6V

- Weight: 62g/0.1lb

- Torque: 20kg.cm (5V); 23kg.cm(6.5V)

- Response speed: 0.18sec/60° (5V)

- Maximum angle: 180°

- Standby Current: 4mA (5V)

- Stall current: 1.8A (5V)

- Working dead zone: 4µs

What's included in the package?

Omni Wheel Chassis:

12V30F MG513 motor x3

60mm/2.4" aluminum alloy omni wheel x3

Car metal base plate x1

Several standard parts and their connecting parts

37 motor bracket x3

Clamping coupling x3

Car metal upper board x1

Electronic Control:

STM32F407VET6 integrated main control board x1

Bluetooth module x1

OLED display x1

Wireless controller for PS2 x1

9800mAh 12V lithium battery x1

Lithium battery charger with protection x1

Data downloading cable x1

ROS Section:

Lidar x1

Depth camera and its angle adjustment mechanism x1

High-speed memory card and card reader x1

Voice module and touch screen x1

Thermal components

Several wires

Note:

- The robot car has been assembled and debugged before delivery. It is ready to use.